Latest News

FACEIT’s AI admin tackling toxic behaviour learns to detect verbal toxicity and mic spam

FACEIT, the makers of Minerva, the first artificial intelligence powered admin, can reveal her upgraded capabilities in the fight against toxic behaviour in competitive games. Minerva can now hear and understand voice messages in all languages, detecting toxic behaviour on voice chat, including verbal toxicity and mic spam.

- Last year FACEIT embarked on a journey to reduce toxic behaviour in online multiplayer games by releasing Minerva, an artificial intelligence powered admin, able to detect and take action against toxic messages at infinite scale. According to surveys of FACEIT users, anti-toxicity solutions were one of the most requested features.

- Since launch she has:

- Analysed more than 1.4bn chat messages

- Detected more than 1.9 million toxic messages and issued warnings

- Banned over 100k players

- As a result, the FACEIT platform has seen:

- 21% fewer toxic messages sent

- 62% reduction in seriously offensive messages being sent

- The first iteration of Minerva began by analysing thousands of text chat messages to detect abusive language and toxic behaviour, but there were other forms of toxicity relating to gameplay that she was unable to detect, including players acting as obstacles for teammates, staying AFK, deliberate friendly fire, blocking, griefing, and potentially offensive and abusive language via voice channels.

- To detect and take action against negative behaviours in gameplay, FACEIT launched Justice, a community-driven portal that, thanks to the endless effort of many members of the community, has resolved more than 60,000 cases and issued almost 30,000 punishments since its launch in April.

- The FACEIT community also fed back that text detection didn’t go far enough, prompting the development of Minerva’s ability to listen. She can now analyse audio data and detect two types of potential abuse: toxic verbal messages and microphone spamming.

- Minerva is able to analyse full conversations among players during a match, an important aspect that makes the overall process more reliable and less prone to the misleading interpretations that could come from analysing single, isolated messages.

- In addition to detecting potentially toxic voice messages, Minerva is able to detect and sanction other repetitive, annoying user behaviours and sounds, such as screaming or excessively loud music, which could impair teammates’ ability to concentrate and make the overall experience less enjoyable. To achieve this, Minerva reviews the audio file of the match and, by applying a CNN (Convolutional Neural Network), recognises a series of negative behaviours and issues either a warning or, if the player has been caught multiple times, a ban.

To find out more about Minerva please head to https://fce.gg/minerva-gets-ears


Latest News

Report affirms Ygam’s leading role in gambling harm prevention

The latest Ygam impact report reiterates the integral role that the charity plays in preventing gaming and gambling harms among young people.

Between January 2024 and March 2025, Ygam trained nearly 10,000 delegates and reached an estimated 1.3 million children and young people across the UK – the highest reach figure since its inception in 2014. Recognised as the UK’s leading charity dedicated to preventing gambling harms among young people, Ygam has continued to set the standard in the sector.

The charity has significantly strengthened its focus on data, evaluation, and evidence-based practice, commissioning independent evaluations of four of its flagship programmes. These evaluations have generated robust insights into the long-term effectiveness of Ygam’s approach and are helping to shape the future of prevention education across the UK.

This report brings together a rich body of evidence, including independent evaluations, pre- and post-training feedback, and delegate testimonials – compiled to inform strategic direction and long-term effect.

Ygam’s growing prominence is reflected in its expanding reputation and influence across the youth sector. The charity continues to build strong partnerships with schools, universities, youth organisations, and community groups, ensuring its resources are embedded where they can make the greatest impact. Ygam is now working with esteemed brands including The Scouts, NSPCC, The Children’s Society, TSB Bank, Place2Be, and Barnardo’s.

The charity’s work has also been praised by Gambling Minister Baroness Twycross, who welcomed the publication of the report.

Parliamentary Under-Secretary of State at the Department for Culture, Media and Sport, Baroness Twycross, said: “I welcome this report, which highlights Ygam’s vital role in educating more than one million young people on how to lead safer digital lives.

“One of my key priorities as gambling minister is to strengthen protections around those most vulnerable to harmful gambling and I look forward to collaborating with Ygam in future as we continue to build a safer online space for young people.”

Helen Martin, Chief Operating Officer and Interim Chief Executive at Ygam, said: “I’m incredibly proud to present this impact report, which highlights Ygam’s leading role in the prevention field and our recognised expertise in safeguarding children and young people. Central to our success is a strong commitment to collaboration and the transformative power of partnership.

“I’m delighted with the strides we’ve made in evaluating our work. While our reach figures are impressive, they represent just one facet of the significant impact we are achieving. By investing time and resources in rigorous evaluation, we ensure our programmes are not only evidence-based but also exemplify best-in-class standards and deliver lasting impact.

“Our dedication to thorough evaluation, ongoing learning, and reflective practice empowers us to continually enhance our approach and meet the evolving needs of the communities we support. This commitment will continue to reinforce our position as trusted experts in the field.”

Key findings of the Impact Report 2024/2025:

- An estimated 1,324,416 young people reached through delegates trained.

- 9,448 delegates trained in positions of care and influence over young people, including 3,762 teachers and youth workers.

- 1 million social media impressions, marking a 322% increase from 2023.

- 97% of delegates would recommend Ygam training to a colleague.

- 97% of delegates felt better equipped to identify and respond to gambling harms following Ygam training.

- 50% of teachers and youth workers said they had implemented the Ygam materials in their classroom within 12 months of completing the training.

- 2,134 volunteer leaders were reached through the Scout Association partnership, safeguarding an estimated 45,000 children and young people.

- 50 universities visited across the UK.

- An estimated 115,000 university students reached.

This report reinforces the charity’s commitment to independent evaluation, learning, and reflection, which helps to continuously strengthen its own portfolio and harm prevention efforts across the wider sector.

You can read Ygam’s full Impact Report 2024-5 here.

The post Report affirms Ygam’s leading role in gambling harm prevention appeared first on European Gaming Industry News.

Latest News

Midnite named principal partner of Sheffield United

- Midnite to be Blades’ front-of-shirt sponsor for 2025-26 season

- Lucky season ticket holders will get VIP seat upgrade for every match thanks to Midnite

- Midnite is among UK’s fastest-growing sportsbooks.

Midnite, one of the UK’s fastest-growing online sportsbooks, has been named as the principal partner of Sheffield United for the 2025-26 season.

Midnite’s logo will be on the front of Blades shirts for the men’s and women’s adult first teams, training kits and adult replica shirts. It will also be displayed around Bramall Lane, on home match team sheets, the matchday programme and across the club’s social media channels.

The partnership also launches Midnite Premium Seat Upgrade. This will see two lucky season ticket holders drawn at random before each men’s home league game to enjoy a premium match experience in the prestigious Tony Currie Suite.

Midnite and Sheffield United will also collaborate to bring Blades fans closer to their club with a series of unique moments and unprecedented opportunities throughout the season.

Midnite was the official betting partner of the 2025 World Snooker Championship, held at the Crucible in Sheffield earlier this year.

Jonathan Shaw, Vice President of Growth at Midnite, said: “It’s a privilege for Midnite to become principal partner of Sheffield United for the coming season. The club has a proud history and a strong connection with its supporters and we’re committed to helping make this a memorable season for Blades fans.

“This partnership continues our efforts to grow Midnite as a challenger brand in the UK market, as we look to build our presence and offer a genuine alternative to the established tier-one operators. We’re looking forward to working with the club and its supporters throughout the season.”

Paul Fielder, Head of Commercial for Sheffield United Football Club, said: “We’re pleased to welcome Midnite as our principal partner for the 2025-26 season. They’ve shown a clear commitment to working with the club and its supporters and we’ve been impressed by their thoughtful and collaborative approach.

“We look forward to developing the partnership over the season and providing Blades fans with some memorable moments along the way.”

Midnite and Sheffield United’s partnership will operate in accordance with the Gambling Commission’s codes of practice.


Latest News

Unlock Top-Tier Deals and Careers: Parimatch joins iGB L!VE 2025

Parimatch, the global entertainment company, is set to make a significant impact at iGB L!VE 2025, taking place in London from July 2–3. Located at Stand E34, the Parimatch team will welcome industry leaders, potential partners, and top talent to explore a world of premium entertainment opportunities.

iGB L!VE is a cornerstone event in the iGaming calendar. That is why Parimatch is creating a hub for high-value connections with key decision-makers. The stand will be a must-visit destination for attendees seeking access to a top-tier network of C-level executives and the best deals from an Affiliate program operating globally across the Middle East, Southeast Asia, and Europe. The Parimatch Affiliate team will be on hand to discuss the best deals and hottest offers, designed to drive high performance for partners.

The Parimatch experience at Stand E34 is designed to be unforgettable, going beyond performance to build strong alliances and celebrate shared success. Demonstrating a commitment that goes beyond industry standards, Parimatch Affiliates will host an exclusive side event for its top partners: a trip to the Formula 1 race in Silverstone. This ethos will be reflected at the stand through a dynamic atmosphere where insights and energy converge, complete with engaging activities, prize draws, and limited-edition merchandise drops.

In addition to fostering business objectives, Parimatch is focusing more than ever on its employer brand. The company’s Employer Brand and HR teams will be on-site for open conversations with talented professionals. Visitors can gain direct insights into Parimatch’s vibrant corporate culture, diverse work formats, and significant career opportunities. This provides a transparent look into life at a leading global entertainment company.


-

Latest News7 days ago

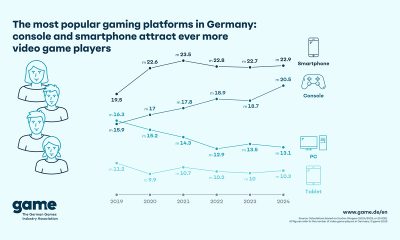

Latest News7 days agoThe Most Popular Gaming Platforms in Germany: Console and Smartphone Attract Ever More Video Game Players

-

Balkans7 days ago

Balkans7 days agoSYNOT Partners with Efbet in Bulgaria

-

Asia7 days ago

Asia7 days agoFIFA, NBA, UFC and More Sports Events Go Live – Crypto Sportsbook BETY Offers Global Sports Betting Coverage 2025

-

Australia6 days ago

Australia6 days agoVGCCC: Minors Exposed to Gambling at ALH Venues

-

EveryMatrix Press Releases6 days ago

EveryMatrix Press Releases6 days agoSlotMatrix unleashes divine riches in Fortuna Gold where gods rule the reels

-

Brazil7 days ago

Brazil7 days agoTG Lab unveils new Brazil office to further cement position as market’s most localised platform

-

Africa6 days ago

Africa6 days agopawaTech strengthens its integrity commitment with membership of the International Betting Integrity Association

-

Baltics6 days ago

Baltics6 days agoEstonian start-up vows to revolutionise iGaming customer support with AI